
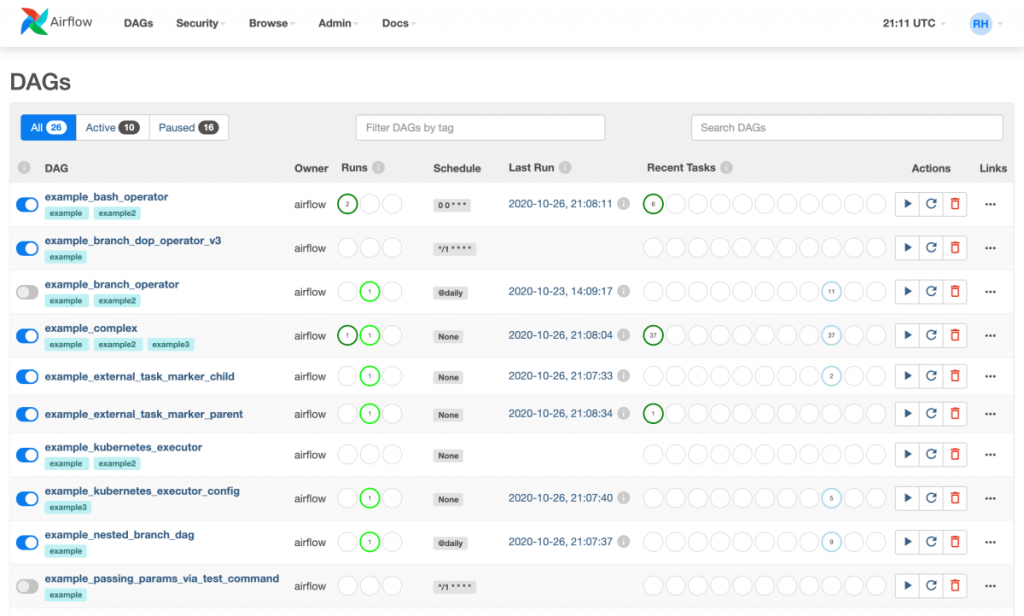

This blog post is part of a series in which an entire ETL pipeline is built using Airflow 2.0's newest syntax and Raspberry Pis. Also, check out my previous post on how to install Airflow 2 on a Raspberry Pi. This post assumes knowledge of some Airflow terms, so here's a great place to refresh your memory.

This tutorial explains how to deploy a Kedro project on Apache Airflow with Astronomer. Part of the process is packaging the Kedro pipeline as an Astronomer-compliant Docker image.

This tutorial builds on the regular Airflow tutorial and focuses specifically on writing data pipelines using the TaskFlow API paradigm, which was introduced in Airflow 2.0, contrasting it with DAGs written using the traditional paradigm. The traditional tutorial DAG file begins with a module docstring and these imports:

```python
"""
# Tutorial Documentation
Documentation that goes along with the Airflow tutorial.
"""
from datetime import timedelta
from textwrap import dedent

# The DAG object; we'll need this to instantiate a DAG
from airflow import DAG

# Operators; we need this to operate!
from airflow.operators.bash import BashOperator
```

Airflow 2.0 introduced a new, comprehensive REST API that set a strong foundation for a new Airflow UI and CLI in the future. Additionally, the new API:

- implements CRUD (Create, Read, Update, Delete) operations on all Airflow resources;
- makes for easy access by third parties.

I'm proud to announce that Apache Airflow 2.2.0 has been released. It contains over 600 commits since 2.1.4 and includes 30 new features, 84 improvements, 85 bug fixes, and many internal and documentation changes. As the changelog is quite large, the following are some notable new features that shipped in this release.

This tutorial provides a step-by-step guide through all the crucial concepts of deploying Airflow 2.0 on an Ubuntu 20.04 VPS (I am using a DigitalOcean droplet) with the help of NGINX.

I'm answering my own question here, mainly because my initial question didn't give people a lot to go on. Much appreciation to all who responded regardless.

I needed to add a few packages to get the MSSQL connection working, so I extended the Docker image. Because of a bug, I also needed to add `apache-airflow-providers-microsoft-azure`:

```dockerfile
# Base image assumed to be the official Airflow 2.0.1 image
FROM apache/airflow:2.0.1
RUN pip install apache-airflow-providers-microsoft-mssql
# pip needs "==" to pin an exact version
RUN pip install apache-airflow-providers-microsoft-azure==1.2.0rc1
```

Build the new image and set the environment variable so that docker-compose uses it, as specified in the tutorial:

```bash
docker build -t custom/apache:2.0.1 -f …
echo -e "AIRFLOW_IMAGE_NAME=custom/apache:2.0.1" > …
```

Here's the code to get some data. I added the connection via the UI, on the Connections screen in Airflow:

```python
from airflow.operators.python import PythonOperator, get_current_context, task
from airflow.providers.microsoft.mssql.hooks.mssql import MsSqlHook

# "mssql_default" is the connection created on the Connections screen
conn = MsSqlHook.get_connection(conn_id="mssql_default")
# get_hook() returns the hook matching the connection type (MsSqlHook here)
hook = conn.get_hook()
df = hook.get_pandas_df(sql="SELECT top 5 * FROM dbo.…")
```
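If the `echo` step redirects with `>` into `.env`, it replaces every other entry in that file (for example an `AIRFLOW_UID` line written during the docker-compose quick start), while appending with `>>` adds a duplicate line on every rerun. The following is a minimal sketch, assuming a plain `KEY=value` `.env` file, of setting `AIRFLOW_IMAGE_NAME` in place instead; `upsert_env` is a hypothetical helper name, not part of Airflow or Docker.

```python
import tempfile
from pathlib import Path


def upsert_env(path: Path, key: str, value: str) -> None:
    """Set KEY=value in a .env file, replacing any existing line for KEY."""
    lines = path.read_text().splitlines() if path.exists() else []
    entry = f"{key}={value}"
    for i, line in enumerate(lines):
        # Compare only the text before the first '=' so values may contain '='.
        if line.split("=", 1)[0].strip() == key:
            lines[i] = entry
            break
    else:
        # No existing line for KEY: append one.
        lines.append(entry)
    path.write_text("\n".join(lines) + "\n")


if __name__ == "__main__":
    # Work in a temporary directory so the demo never touches a real .env file.
    with tempfile.TemporaryDirectory() as tmp:
        env_file = Path(tmp) / ".env"
        env_file.write_text("AIRFLOW_UID=50000\n")  # hypothetical existing entry
        upsert_env(env_file, "AIRFLOW_IMAGE_NAME", "custom/apache:2.0.1")
        # Running it a second time leaves a single AIRFLOW_IMAGE_NAME line.
        upsert_env(env_file, "AIRFLOW_IMAGE_NAME", "custom/apache:2.0.1")
        print(env_file.read_text(), end="")
```

Because existing lines are kept and only the matching key is rewritten, the step can be rerun safely after every image rebuild.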
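Adding the connection through the UI works, but Airflow can also read a connection from an environment variable named `AIRFLOW_CONN_` plus the upper-cased connection ID, whose value is a connection URI. That is convenient in a docker-compose setup because the connection then travels with the configuration. The sketch below builds such a name/URI pair for `mssql_default`; the helper name, host, credentials, and database are hypothetical. The credentials are percent-encoded because characters such as `@`, `:`, or `/` in a password would otherwise break URI parsing.

```python
from __future__ import annotations

from urllib.parse import quote


def mssql_conn_env(conn_id: str, user: str, password: str,
                   host: str, port: int, database: str) -> tuple[str, str]:
    """Return (variable name, connection URI) for an env-var-defined connection."""
    # Airflow looks for AIRFLOW_CONN_ followed by the upper-cased conn_id.
    name = f"AIRFLOW_CONN_{conn_id.upper()}"
    # Percent-encode user, password and database; the URI path becomes the
    # connection's "schema" field, which for MSSQL is the database name.
    uri = (
        f"mssql://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{quote(database, safe='')}"
    )
    return name, uri


if __name__ == "__main__":
    # Hypothetical host and credentials, for illustration only.
    name, uri = mssql_conn_env(
        "mssql_default", "airflow", "p@ss/word",
        "mssql.example.internal", 1433, "warehouse",
    )
    print(f"{name}={uri}")
    # → AIRFLOW_CONN_MSSQL_DEFAULT=mssql://airflow:p%40ss%2Fword@mssql.example.internal:1433/warehouse
```

The printed line can be placed in the `environment` section of the Airflow services, after which `MsSqlHook.get_connection(conn_id="mssql_default")` should resolve it without any UI setup.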
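The REST API features listed above can be exercised with nothing but the Python standard library. This sketch only builds `urllib.request.Request` objects for a Read (list DAGs), an Update (pause a DAG), and a Create (trigger a DAG run) against the stable `/api/v1` endpoints. It assumes a webserver at `http://localhost:8080`, basic authentication enabled (for example `auth_backend = airflow.api.auth.backend.basic_auth` in the `[api]` section), a hypothetical `admin`/`admin` user, and a DAG with the ID `tutorial`; `airflow_request` is a hypothetical helper name.

```python
import base64
import json
from typing import Optional
from urllib.request import Request


def airflow_request(base_url: str, method: str, path: str,
                    user: str, password: str,
                    body: Optional[dict] = None) -> Request:
    """Build (but do not send) a request to Airflow's stable REST API."""
    # HTTP basic auth: base64 of "user:password".
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    headers = {"Authorization": f"Basic {token}", "Accept": "application/json"}
    data = None
    if body is not None:
        # JSON payload for PATCH/POST calls.
        data = json.dumps(body).encode()
        headers["Content-Type"] = "application/json"
    url = f"{base_url.rstrip('/')}/api/v1{path}"
    return Request(url, data=data, headers=headers, method=method)


if __name__ == "__main__":
    base = "http://localhost:8080"  # assumed webserver address
    # Read: list DAGs.
    list_dags = airflow_request(base, "GET", "/dags", "admin", "admin")
    # Update: pause a DAG.
    pause = airflow_request(base, "PATCH", "/dags/tutorial", "admin", "admin",
                            {"is_paused": True})
    # Create: trigger a new DAG run with an empty conf.
    trigger = airflow_request(base, "POST", "/dags/tutorial/dagRuns",
                              "admin", "admin", {"conf": {}})
    for req in (list_dags, pause, trigger):
        print(req.get_method(), req.full_url)
```

To actually send one of these, pass it to `urllib.request.urlopen` and parse the JSON response body; keeping construction separate from sending makes the requests easy to inspect first.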
